Even the smartest security tools are only as powerful as the experts who use them. That’s especially true in network security analysis, where a tool like Suricata is only as good as the rules guiding it.

Suricata is a versatile open-source engine that can operate as an Intrusion Detection System (IDS), Intrusion Prevention System (IPS), or Network Security Monitoring (NSM) platform. Its ability to identify and block malicious network activity is real, but it depends entirely on the quality of its rules. Poorly designed rules lead to missed threats, alert fatigue, or both — and neither outcome does you any favors.

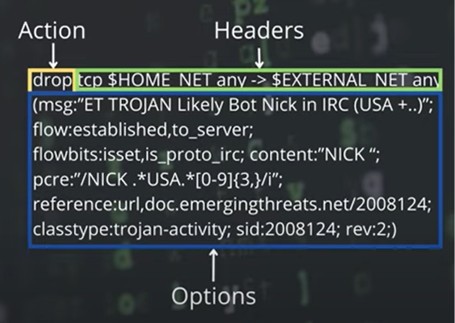

This article covers the fundamentals of writing solid Suricata rules. We’ll break down the three core components — actions, headers, and options — and look at how each one shapes what the rule does and how well it performs in a real environment.

Every organization operates with its own patterns, common practices, and network configurations. Those details, when you actually use them, can make your Suricata rules significantly more precise. Rather than treating organizational quirks as noise, treat them as intelligence. The more your rules reflect how your environment actually behaves, the harder it becomes for an attacker to operate without tripping one.

Here are some practical ways to put that context to work:

Baseline Activity Hours: If your company wraps up by 7:00 PM and doesn’t allow off-hours remote connections, write rules that flag exactly that kind of traffic. An unexpected outbound connection late at night points to either a compromised device or an unauthorized access attempt, and either way you want to know.

Consistent Software Environments: Organizations that standardize on a specific browser or application suite can monitor for deviations from that baseline. A Suricata rule that alerts on traffic from an unauthorized browser’s user-agent string can surface policy violations, compromised endpoints, or malware using its own HTTP client.

IP Address Allocation by Function: Segmenting your network by role gives you a strong foundation for anomaly detection. If all database servers live in 192.168.0.0/24 and web servers are in 192.168.1.0/24, you can write rules that flag improbable behavior — like a database server initiating outbound internet connections, or a printer trying to talk to a domain controller.

Expected Communication Pathways: Think about normal interaction patterns in your environment. Direct client-to-client connections are often unnecessary and frequently prohibited. Even if a firewall ultimately blocks the attempt, the fact that it happened is worth capturing.

Customized Ports and Protocols: If your infrastructure runs a service on a non-standard port, traffic showing up on the default port for that protocol is worth a second look. The same goes in reverse — known ports being used for unexpected services. Build rules that reflect your actual port assignments rather than generic defaults.

The more your rules reflect your real environment, the more likely an attacker is to expose themselves just by operating normally in a space they don’t fully understand.
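As a minimal sketch of the address-allocation and communication-pathway ideas above, the two rules below flag a database server opening an outbound connection and one workstation connecting directly to another. The 192.168.0.0/24 database subnet comes from the example above. The 192.168.2.0/24 workstation subnet, the message text, the threshold values, and the SIDs (taken from the 1000000+ range conventionally used for local rules) are assumptions to adapt to your own network.

```
# Database servers should not initiate connections to the internet.
alert tcp 192.168.0.0/24 any -> $EXTERNAL_NET any (msg:"LOCAL Database server initiating outbound connection"; flow:to_server; flags:S; threshold:type limit, track by_src, count 1, seconds 60; classtype:policy-violation; sid:1000001; rev:1;)

# Workstations (hypothetical 192.168.2.0/24 subnet) should not open connections to each other.
alert tcp 192.168.2.0/24 any -> 192.168.2.0/24 any (msg:"LOCAL Direct client-to-client connection attempt"; flow:to_server; flags:S; threshold:type limit, track by_src, count 1, seconds 60; classtype:policy-violation; sid:1000002; rev:1;)
```

Matching on the initial SYN keeps the focus on who started the connection, and the threshold limits each source to one alert per minute so a single noisy host does not flood the log.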

A Suricata rule is built on three elements: the action, the header, and the options. Each one plays a specific role in how traffic gets evaluated and what happens when a match is found.
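To make the three parts concrete, here is a small illustrative rule with each element called out in comments. The traffic it matches (outbound connections to port 6667), the message text, and the SID are placeholders chosen for the sketch, not a recommended detection.

```
# action:  alert
# header:  tcp $HOME_NET any -> $EXTERNAL_NET 6667
# options: everything inside the parentheses, each ending in a semicolon
alert tcp $HOME_NET any -> $EXTERNAL_NET 6667 (msg:"LOCAL Outbound connection to IRC port"; flow:to_server; flags:S; sid:1000003; rev:1;)
```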

When writing rules that will actually hold up over time, target the underlying vulnerability rather than the specific exploit used against it. Here’s why that matters:

Exploits change fast. Attackers constantly modify their tools to sidestep signature-based detection — tweaking payloads, changing patterns, re-encoding content. A rule written to catch one specific exploit variant can become useless within days.

Targeting the flaw gives you staying power. A rule that detects improper use of a protocol, malformed input, or abnormal field sizes will catch both current and future attack variants targeting the same weakness. To avoid detection, the attacker has to stop exploiting that weakness altogether, not just repackage the payload.

Exploit-specific signatures are easy to bypass. Buffer padding characters, encoding schemes, and payload structure are all things attackers can change without much effort. But they can’t change the fact that they’re exploiting a specific flaw, and that’s what your rule should be looking for.

In practice, this means shifting from “does this traffic contain these specific bytes” to “does this traffic show signs that something is being exploited.” That approach produces rules that are more durable and more useful.

The action tells Suricata what to do when traffic matches a rule. The available actions behave differently depending on whether Suricata is running in IDS or IPS mode:

| Keyword | Behavior |
|---|---|
| alert | Generates an alert when traffic matches; does not block. Common in IDS mode. |
| pass | Allows matching traffic through without generating an alert or further inspection. |
| drop | IPS mode only. Stops processing and discards the matching packet immediately. |
| reject | Notifies the sender via TCP reset or ICMP unreachable. In IPS mode, the packet is also discarded. |
| rejectsrc | Same as reject: sends the notification only to the traffic source. |
| rejectdst | Sends the notification only to the traffic destination. |
| rejectboth | Sends the notification to both source and destination. |

Each action serves a different purpose, ranging from passive logging to active blocking. Choosing the right one depends on what you’re trying to accomplish and whether you’re in a position to safely drop traffic.
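The difference is easiest to see with the same match under two actions. This is a sketch only: the choice of inbound Telnet (port 23) as the traffic of interest, the message text, and the SIDs are assumptions, and in practice you would load one of the two rules rather than both.

```
# IDS-style: record the event and let the traffic through.
alert tcp $EXTERNAL_NET any -> $HOME_NET 23 (msg:"LOCAL Inbound Telnet attempt"; flow:to_server; flags:S; sid:1000004; rev:1;)

# IPS-style: same match, but the packet is discarded (requires Suricata to run inline).
drop tcp $EXTERNAL_NET any -> $HOME_NET 23 (msg:"LOCAL Inbound Telnet attempt dropped"; flow:to_server; flags:S; sid:1000005; rev:1;)
```

A common way to roll out a blocking rule is to run it as alert first, review what it matches, and switch the action to drop only once the matches look clean.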

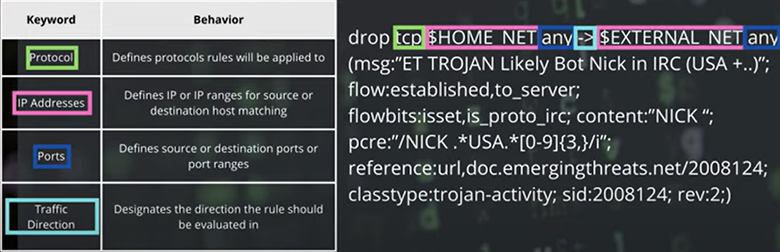

The header defines the specific traffic the rule applies to. It covers the protocol, source and destination IP addresses, ports, and the direction of traffic flow.

Getting the header right is how you avoid drowning in false positives. The more precisely you define the traffic characteristics, the more targeted your detection becomes.

Suricata supports a wide range of network and application layer protocols:

Basic Protocols

| Protocol |
|---|
| tcp |
| udp |
| icmp |
| ip |

Application Protocols

| Protocol | Protocol | Protocol |
|---|---|---|
| http | ftp | tls (ssl) |
| smb | dns | dcerpc |
| ssh | smtp | imap |
| nfs | ikev2 | krb5 |
| ntp | dhcp | rfb |
| rdp | snmp | tftp |
| sip | http2 | |

Industrial Protocols (must be enabled in config file)

| Protocol |
|---|
| modbus |
| dnp3 |
| enip |

Worth noting: the industrial protocols don’t work out of the box. You’ll need to enable them in your Suricata configuration file before writing rules against them.
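Once Modbus parsing is enabled, a rule can use the protocol name directly in the header. The sketch below assumes the modbus keyword's access write form as documented for recent Suricata releases, and assumes Modbus runs on its usual TCP port 502. The message text and SID are placeholders.

```
# Flag any Modbus request that writes to a device on the home network.
alert modbus any any -> $HOME_NET 502 (msg:"LOCAL Modbus write request"; flow:to_server; modbus: access write; sid:1000006; rev:1;)
```

In an environment where only a few engineering workstations are supposed to issue writes, the source address can be narrowed to a negated group of those hosts so that only unexpected writers raise an alert.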

IP addresses in rules can be single addresses, ranges, variables, or negated values:

| Type | Example |
|---|---|
| Single IP | 1.2.3.4 |
| CIDR Range | 192.168.0.0/24 |
| Variable | $HOME_NET (defined in config) |
| Variable | $EXTERNAL_NET (defined in config) |
| Negation | !192.168.1.5 |
| Grouping | [$HOME_NET, 192.168.0.0/24] |
| Any IP | any |

The same logic applies to ports:

| Type | Example |
|---|---|
| Single Port | 80 |
| Any Port | any |
| Port Range | 100:102 |
| Negation | !80 |
| Grouping | [100:120,!101] |

The grouping operator gives you a lot of flexibility for targeting precise port ranges while excluding specific exceptions within them.
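Here is how the address and port forms from the two tables combine in a single header. The values are the same illustrative ones used in the tables; the message text and SID are assumptions.

```
# Source: any host except 192.168.1.5.
# Destination: home network, ports 100 through 120 excluding 101.
alert tcp !192.168.1.5 any -> $HOME_NET [100:120,!101] (msg:"LOCAL Connection to restricted port range"; flow:to_server; flags:S; sid:1000007; rev:1;)
```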

Not every organization keeps its HTTP traffic on 80, 443, or 8080. Some run essential services on non-standard ports — sometimes for legacy reasons, sometimes by design. Attackers know that most defensive tools are weighted toward monitoring well-known ports, so they’ll sometimes use lesser-used ones to stay under the radar.

Build rules that account for this:
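A minimal sketch, assuming plain HTTP is only expected on ports 80 and 8080 and that SSH has been moved to a custom port so nothing legitimate should speak SSH on port 22. Both rules rely on Suricata identifying the application protocol rather than trusting the port number. The port choices, message text, thresholds, and SIDs are assumptions.

```
# HTTP detected on any destination port other than the ones you expect.
alert http $HOME_NET any -> any ![80,8080] (msg:"LOCAL HTTP on unexpected port"; flow:established,to_server; threshold:type limit, track by_src, count 1, seconds 300; sid:1000008; rev:1;)

# SSH detected on the default port even though the service was relocated.
alert ssh any any -> $HOME_NET 22 (msg:"LOCAL SSH on default port despite relocation"; flow:established,to_server; threshold:type limit, track by_src, count 1, seconds 300; sid:1000009; rev:1;)
```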

This kind of targeted monitoring can surface internal reconnaissance, unauthorized tunneling, or configuration changes that port-based surveillance would otherwise miss.

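The direction operator sits between the source and destination in the header and controls which way traffic is matched:

| Operator | Meaning |
|---|---|
| -> | Unidirectional (source to destination) |
| <> | Bidirectional |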
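As a short illustration of the bidirectional operator, the rule below matches packets travelling either way between the database subnet used earlier and external addresses. The message text, threshold, and SID are assumptions.

```
# <> matches in both directions between the two sides of the header.
alert tcp 192.168.0.0/24 any <> $EXTERNAL_NET any (msg:"LOCAL Traffic between database subnet and internet"; threshold:type limit, track by_src, count 1, seconds 60; sid:1000010; rev:1;)
```

Most rules use -> because knowing the direction is part of the detection; <> is mainly useful when either side might speak first.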

This is where the real work happens. Options let you define precisely what the rule is looking for at the content level. They sit inside the parentheses that follow the header, and each option ends with a semicolon.

Here’s a real-world example showing how options work together:

| Keyword | Value | Description |
|---|---|---|
| msg | "ET TROJAN Likely Bot Nick in IRC (USA +..)" | Text included in the alert when the rule fires |
| flow | established,to_server | Matches established connections to the server |
| flowbits | isset,is_proto_irc | Only runs when is_proto_irc bit is set |
| content | "NICK " | Content the signature matches against |
| pcre | `"/NICK .*USA.*[0-9]{3,}/i"` | Perl-compatible regex against the packet payload |
| reference | url,doc.emergingthreats.net/2008124 | Reference link for the alert |
| classtype | trojan-activity | Classification metadata |
| sid | 2008124 | Signature ID |
| rev | 2 | Signature revision |
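Assembled into one rule, the options from the table would look roughly like this. The table does not give the action or header, so the alert action and the tcp $HOME_NET any -> $EXTERNAL_NET any header shown here are assumptions. The flowbits check also assumes another rule in the ruleset sets is_proto_irc once IRC traffic has been identified.

```
alert tcp $HOME_NET any -> $EXTERNAL_NET any (msg:"ET TROJAN Likely Bot Nick in IRC (USA +..)"; flow:established,to_server; flowbits:isset,is_proto_irc; content:"NICK "; pcre:"/NICK .*USA.*[0-9]{3,}/i"; reference:url,doc.emergingthreats.net/2008124; classtype:trojan-activity; sid:2008124; rev:2;)
```

The content match on "NICK " narrows down candidate packets before the more expensive regular expression is evaluated.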

Options can handle:

| Purpose | Example |
|---|---|
| Alert message | msg:"Detected suspicious URL access"; |
| Flow state | flow:established,to_server; |
| State tracking across rules | flowbits:set,suspicious_behavior; and flowbits:isset,suspicious_behavior; |
| Content matching | content:"/login"; http_uri; |
| Regular expressions | pcre:"/\bselect.+from\b/i"; |
| References | reference:url,www.example.com; |

A lot of early buffer overflow detection rules made the same mistake: they looked for long strings of repeated characters (like a block of “A”s) in HTTP traffic. That approach works exactly once. Attackers change the characters, break up the pattern, or encode the payload, and suddenly the rule is worthless.

Weak rule approach: Match on content:"AAAAAAAAAAAAAA" and throw a generic alert. An attacker using “BBBBBBBBBB” walks right past it.

The better approach is to focus on the indicators of exploitation itself — things like field lengths that violate protocol norms, malformed request structures, or input sizes that have no business being that large in a legitimate request. Regular expressions and byte_test operators can help enforce these kinds of constraints, and the resulting rule holds up against payload variations because it’s targeting the behavior, not the content.

Target the predictable anomalies that result from exploitation. The specific payload is temporary. The underlying flaw it’s targeting is not.
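One way to express that idea, as a sketch rather than a tuned detection, is to alert on the size of the input instead of its bytes. The rule below uses the urilen keyword to flag request URIs longer than a limit. The 2048-byte limit, the use of $HTTP_SERVERS as the destination, the message text, and the SID are assumptions, so pick a limit that fits what your applications legitimately send.

```
# Fires on any HTTP request whose URI is longer than 2048 bytes,
# regardless of which characters are used to fill it.
alert http $EXTERNAL_NET any -> $HTTP_SERVERS any (msg:"LOCAL Abnormally long HTTP request URI"; flow:established,to_server; urilen:>2048; classtype:attempted-admin; sid:1000011; rev:1;)
```

Swapping “A”s for “B”s or re-encoding the padding makes no difference here, because the rule never looks at the padding itself.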

Honeytokens are one of the more underutilized tricks in the detection toolkit. The idea is simple: plant fake credentials, records, or data in your environment, then watch for anything that touches them. Legitimate users and systems won’t interact with bait you’ve hidden in places they have no reason to look. Attackers will.

A common implementation is renaming your actual administrator account to something less obvious and creating a fake “Administrator” account that never sees legitimate use. A Suricata rule that alerts on any authentication attempt, email, or credential use associated with that decoy account gives you a high-confidence indicator that something’s wrong — because no real admin would be logging in with it.

The same approach scales to databases. Inject fake user records, fake credit card numbers, or counterfeit proprietary documents, then write rules to detect those specific values appearing in network traffic. If any fragment of that decoy data shows up leaving your network, you have a concrete, high-fidelity signal — not a probabilistic guess.
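A minimal sketch of the decoy-record idea, assuming a fake value has been planted in the database. The token string HT-7f3a9c-DECOY is hypothetical, as are the message text and SID; substitute the actual decoy value you planted.

```
# Any outbound payload containing the planted decoy value.
alert tcp $HOME_NET any -> $EXTERNAL_NET any (msg:"LOCAL Honeytoken value leaving network"; flow:established,to_server; content:"HT-7f3a9c-DECOY"; classtype:policy-violation; sid:1000012; rev:1;)
```

A plain content match like this only sees the value when it crosses the sensor unencrypted, so it works best on internal segments or where traffic is decrypted before inspection.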

Honeytokens give attackers enough rope to expose themselves before they’ve done any real damage.

The basics covered here are the foundation, but Suricata’s rule language goes much deeper. Once you start working with the options section, you’ll find tools for regex-based pattern detection, protocol-specific parsing (like uricontent for HTTP), and binary data matching using byte and hex values. That flexibility means you can write rules to catch everything from suspicious SQL patterns to encoded payloads hiding in HTTP traffic.
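Two short sketches of those capabilities follow. The first combines a URI content match with a regular expression, using the same http_uri content-modifier style as the earlier option examples (newer Suricata releases also offer an http.uri sticky-buffer form). The second shows hex matching, where bytes are written between pipe characters. The byte sequence de ad be ef is a hypothetical marker, and the message text and SIDs are assumptions.

```
# Regex-based: SQL keywords in the request URI.
alert http $EXTERNAL_NET any -> $HTTP_SERVERS any (msg:"LOCAL Possible SQL SELECT in request URI"; flow:established,to_server; content:"select"; nocase; http_uri; pcre:"/\bselect\b.+\bfrom\b/Ui"; classtype:web-application-attack; sid:1000013; rev:1;)

# Binary matching: hex bytes inside pipes in a content option.
alert tcp $EXTERNAL_NET any -> $HOME_NET any (msg:"LOCAL Hypothetical binary marker in payload"; flow:established,to_server; content:"|de ad be ef|"; sid:1000014; rev:1;)
```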

For more advanced detection, try combining multiple options and protocols in a single rule. Layer 7 protocol keywords, application-specific content parsing, and industrial protocol support (once enabled in your config) open up a lot of possibilities — whether you’re matching on IRC nicknames, examining byte sequences, or parsing DNS queries.

Signature IDs and revision numbers are worth treating seriously, not just filling in as an afterthought. They’re your version control for rules and matter when you’re tracking changes or sharing signatures across a team.

The best way to build real fluency here is to practice on actual traffic. Grab some sample PCAP files and work through writing rules against real data. For OT environments specifically, the ICS-pcap repository on GitHub (https://github.com/automayt/ICS-pcap) is a solid starting point with captures from industrial protocols.


Writing good Suricata rules requires more than knowing the syntax. It takes an understanding of what normal looks like in your environment and what genuinely shouldn’t be there. Rules built on that foundation are more precise, generate less noise, and hold up better as threats change over time. The technical structure gives you the tools — your operational knowledge of the environment is what makes them sharp.

What are the primary applications of Suricata? Suricata is primarily used as an Intrusion Detection System (IDS), Intrusion Prevention System (IPS), and Network Security Monitoring (NSM) tool to observe and secure network traffic.

Can Suricata function as a replacement for a traditional firewall? No. Suricata offers detection and prevention capabilities, but it operates at a different layer and complements rather than replaces the access control functions of a firewall.

What are effective strategies for minimizing false positives in Suricata alerts? Write precise headers that target specific traffic patterns, and use flow and context-aware options to tighten the matching criteria. The more your rules reflect actual expected behavior in your environment, the fewer false positives you’ll see.

Does Suricata support industrial control system protocols? Yes — Suricata can monitor industrial protocols including Modbus, DNP3, and ENIP. These protocols are not enabled by default and must be turned on in the Suricata configuration file before you can write rules against them.

Is Suricata capable of detecting threats within encrypted network traffic? Suricata can analyze metadata and behavioral patterns associated with encrypted traffic, but deep packet inspection of encrypted content requires TLS decryption or indirect detection techniques based on observable characteristics like connection timing, certificate anomalies, or traffic volume patterns.